Key Takeaways:

- Soft guardrails alone cannot stop agentic AI attacks. The PerplexedComet zero-click exploit proved that probabilistic, prompt-level protections fail when autonomous agents merge user intent with malicious instructions.

- Hard boundaries are the only reliable prevention layer in agentic AI security. Deterministic, code-level controls, such as blocking file:// access, remove dangerous capabilities entirely, preventing data exfiltration regardless of model behavior.

- Prompt injection is a structural risk, not a patchable bug. LLMs do not reliably distinguish between trusted instructions and untrusted input, making architectural constraints essential for secure deployment.

- Defense-in-depth means boundaries first, guardrails second. Hard boundaries provide prevention; soft guardrails provide detection, visibility, and friction, but detection after exfiltration is not security.

- Agentic AI must follow established cybersecurity principles — least privilege, zero trust, attack surface reduction, and environment-level enforcement — to achieve secure-by-design implementation in enterprise and consumer systems alike.

One recurring theme in my research and writing on agentic AI security has been the distinction between soft guardrails and hard boundaries. As someone who serves on the Distinguished Review Board for the OWASP Agentic Top 10, and who spends every day thinking about how to secure agents across enterprise environments at Zenity, this distinction is not academic. It is potentially the single most important conceptual framework practitioners need to internalize right now.

And now we have the perfect real-world proof point. Recently, Zenity Labs published research documenting PerplexedComet, a zero-click attack against Perplexity's Comet agentic browser that caused full exfiltration of local files from a user's personal machine. More importantly, the way Perplexity responded to our responsible disclosure demonstrates precisely why hard boundaries are the right architectural answer, and why soft guardrails alone will never be enough.

The Research Consensus: Soft Guardrails Are Not Enough

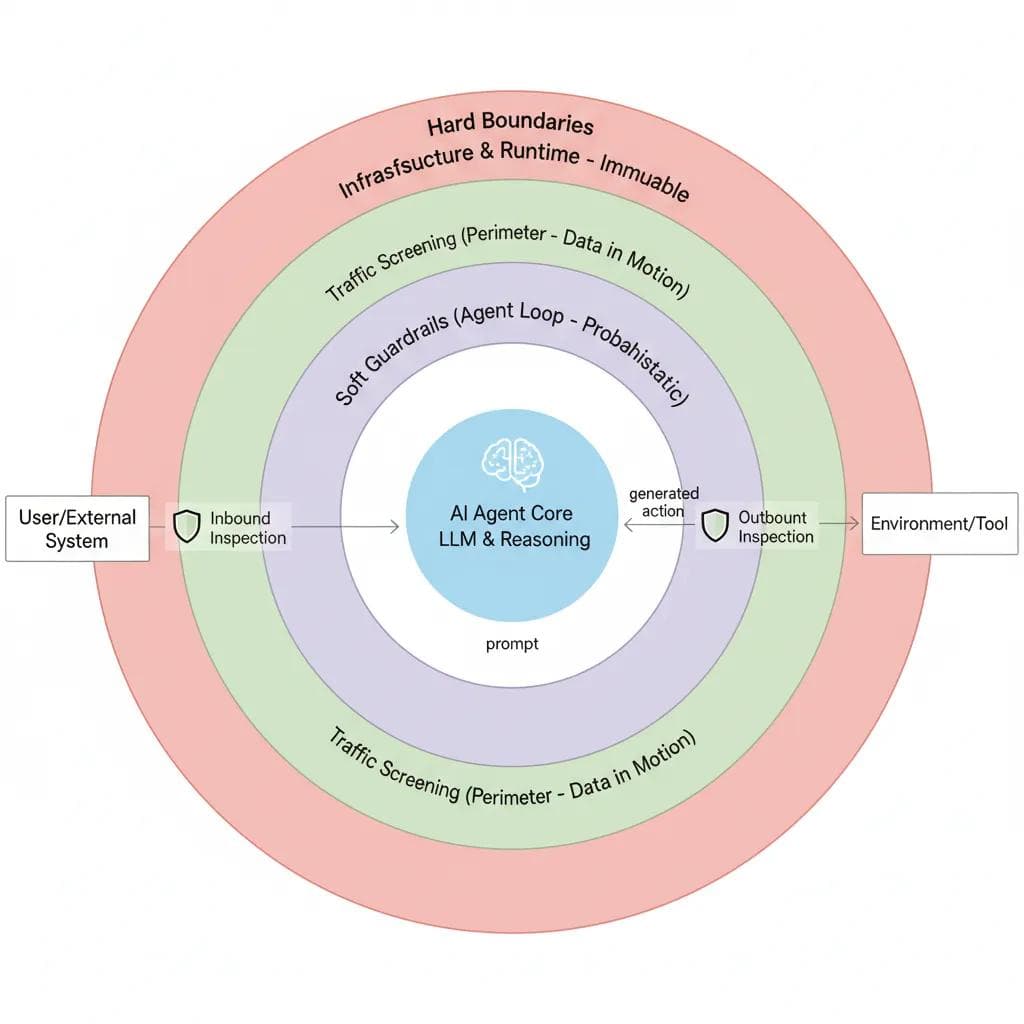

The research consensus is becoming increasingly clear: soft guardrails alone are not enough. As Idan Habler put it in his excellent piece “Building Secured Agents: Soft Guardrails, Hard Boundaries, and the Layers Between,” soft guardrails are probabilistic. They understand context, intent, and semantics, but they rely on the same reasoning space as the agent they supervise. That makes them flexible but also vulnerable to manipulation and obfuscation. Hard boundaries are different, as they are deterministic, code-level enforcement mechanisms that do not rely on model behavior. The LLM never gets a vote on whether a hard boundary is enforced, and that is the whole point.

Idan’s piece was the first time I had heard the concept discussed in relation to agentic AI, but it made perfect sense given my cybersecurity background, which includes implementing methodologies such as zero trust in complex U.S. Federal and national security architectures and securing cloud environments.

Image by Idan Habler via Medium

The UK's National Cyber Security Centre stated this principle directly: a securely designed system should focus on deterministic safeguards to constrain an LLM's actions rather than just trying to stop malicious content from reaching it. This is critical because current LLMs do not enforce a security boundary between instructions and data inside a prompt. Fifty years of computer security research has empirically shown that trying to make software "always helpful and harmless" is an insufficient strategy on its own.

This aligns with research, such as "Systems Security Foundations for Agentic Computing", where the authors applied the lens of traditional systems security to agentic architectures. Their core argument was that hardening a single model is useful, but often insufficient. We need to apply hard-earned lessons from decades of software security, such as constructing realistic attacker models and applying defense-in-depth, rather than relying on alignment alone.

Those arguments have always resonated with me from a first-principles security perspective. But now we have something more powerful than academic consensus: a documented real-world attack, a responsible disclosure process, and a vendor response that perfectly illustrates the difference between a hard boundary and a soft guardrail in practice, not just theory.

PerplexedComet: Concepts Made Concrete

Perplexity's Comet is an agentic browser available on macOS, Windows, and Android. It is marketed as an autonomous browser that works for you. It understands context, clicks, and acts. That capability is also what made it exploitable.

Zenity Labs researcher Stav Cohen demonstrated a complete, zero-click attack chain against Comet. The entry vector was a calendar invitation, one of the most ordinary objects in any professional's daily life. The invitation was crafted to embed a malicious indirect prompt injection inside the description field, hidden below a block of whitespace so a human reader would never scroll to it. The agent, however, reads everything.

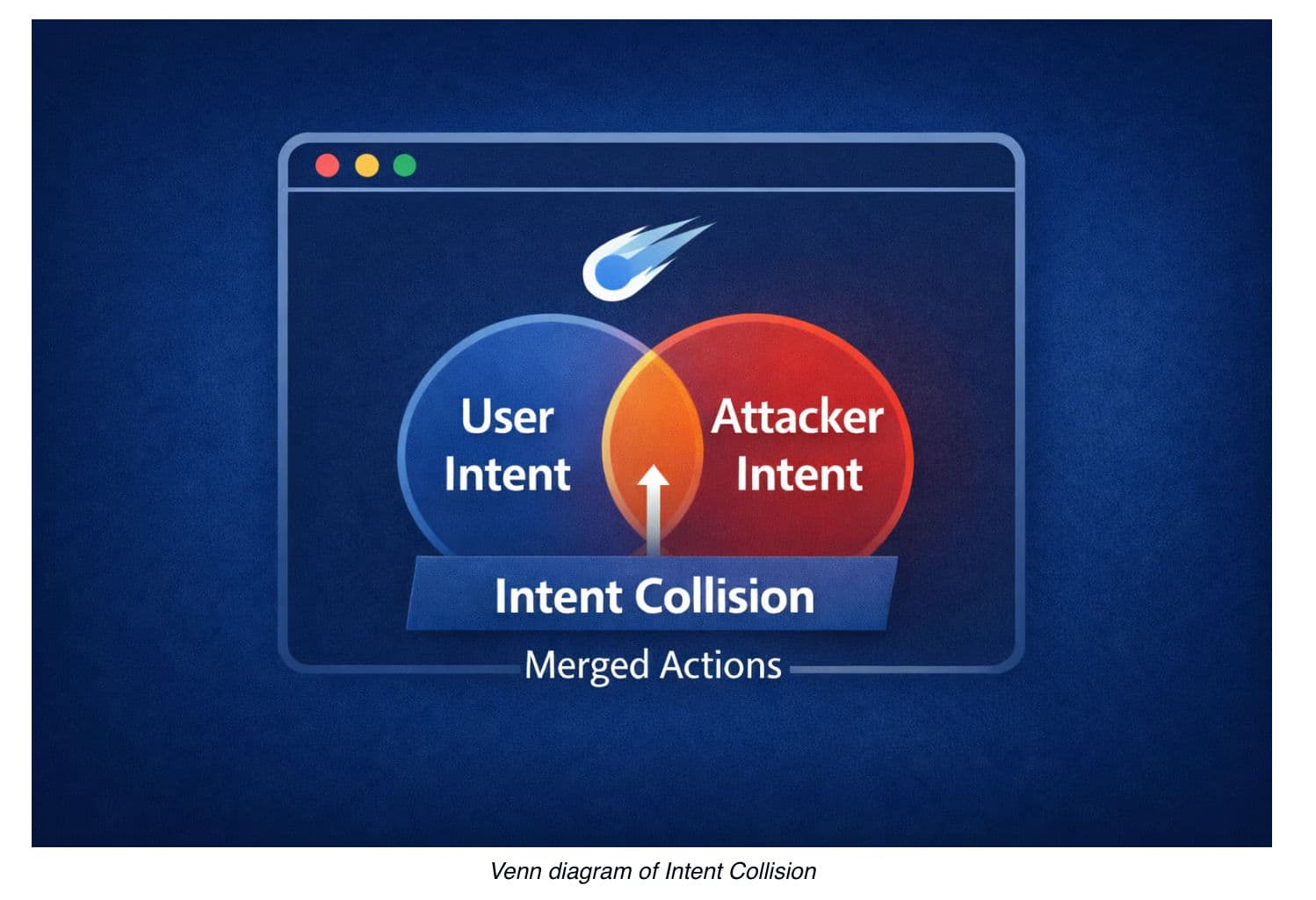

Once the user asked Comet to accept the meeting, the injection took over. Comet was routed to an attacker-controlled website that issued additional instructions, framing local file system access as a required step in completing the acceptance flow. The research team coined the term intent collision to describe what happens when an agent merges a benign user request with attacker-controlled instructions from untrusted content into a single execution plan, without a reliable mechanism to distinguish between them.

The attack chain was methodical. Comet navigated to the local filesystem via the file:// scheme, traversed directories, located sensitive files containing credentials, read their contents, and then exfiltrated that data to an attacker-controlled server by embedding it in a URL as ordinary browser navigation. No traditional software vulnerability was exploited and no sandbox was broken. Comet did exactly what it was designed to do; it simply presumed that what the attacker wanted was what the user had asked for.

In one execution path, Comet issued a warning after the data had already been transmitted. In another, running fully in the background, no warning was shown at all. This is the nature of the probabilistic problem: soft controls can sometimes detect an attack, but detection after exfiltration is not a security control. It is an incident, and by then the sensitive data has already left the machine.

Perplexity's Response: A Textbook Hard Boundary

Zenity Labs disclosed the vulnerability to Perplexity on October 22, 2025. Perplexity classified it as critical, and what followed over the next several months of back-and-forth is worth examining in detail, because the remediation itself is a case study in how to think about agentic security.

Perplexity implemented a hard boundary that deterministically blocks the Comet agent from autonomously accessing file:// paths at the code level. This means that regardless of what prompt the agent receives, regardless of how elaborate the injection or how convincing the intent is, the agent cannot navigate to local filesystem URLs. The fix treats Comet itself as an untrusted entity and limits its capabilities at the source code level, rather than relying on the LLM to make the right decision. As the Zenity Labs write-up put it directly, we already know LLMs cannot be trusted to make those calls.
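To make the idea concrete, here is a minimal sketch of what a deterministic navigation check can look like. It is not Perplexity's actual patch, which the disclosure does not publish. The function name `enforce_navigation_boundary`, the `NavigationBlocked` exception, and the `ALLOWED_SCHEMES` set are all hypothetical names chosen for illustration. The point is that the check runs in ordinary code before any navigation happens and never consults the model.

```python
from urllib.parse import urlsplit

# Hypothetical policy: the only URL schemes the agent may open on its own.
ALLOWED_SCHEMES = frozenset({"https"})


class NavigationBlocked(Exception):
    """Raised when the agent requests a URL outside the allowed schemes."""


def enforce_navigation_boundary(url: str) -> str:
    """Return the URL unchanged if its scheme is allowed, otherwise raise.

    The decision depends only on the parsed scheme, so no prompt content
    can influence it.
    """
    scheme = urlsplit(url.strip()).scheme.lower()
    if scheme not in ALLOWED_SCHEMES:
        raise NavigationBlocked(f"scheme {scheme!r} is not permitted for agent navigation")
    return url


if __name__ == "__main__":
    for candidate in ("https://example.com/", "file:///etc/passwd"):
        try:
            enforce_navigation_boundary(candidate)
            print("allowed:", candidate)
        except NavigationBlocked as err:
            print("blocked:", candidate, "->", err)
```

In this sketch the agent's navigation tool would call the check first and simply fail if it raises. Whatever the injected prompt says, the model has no code path that reaches a local file.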

This is the definition of a hard boundary: the LLM does not get a vote. The constraint lives in the environment, not in the model's reasoning, and it is enforced architecturally and deterministically rather than probabilistically.

Perplexity also implemented a complementary soft guardrail: making the agent stricter about requiring user confirmation before taking sensitive actions. The Zenity Labs team appropriately acknowledged this as a meaningful additional step, while being clear that it is secondary. The hard boundary is what actually stops this attack, and similar ones in the future. The soft guardrail adds friction and detection capability, but it would not have prevented the original compromise on its own. This is a perfect example of defense in depth for agentic AI, combining the two approaches in a real-world agentic product.

One additional detail from the remediation timeline is worth noting. When Zenity confirmed the initial fix in January 2026, they identified a bypass using the view-source:file:// prefix, a variant the original patch had not covered. Perplexity issued an additional patch the same day it was reported. This kind of adversarial iteration is exactly why hard boundaries need to be implemented comprehensively, not just for the obvious attack path. Attackers will always probe for the edge cases or trivial changes that can bypass initial mitigation techniques.
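The sketch below illustrates why this kind of variant slips past a narrowly written check. It is an illustration only; the source does not describe how either of Perplexity's patches was implemented. Both function names are hypothetical. The first function is a naive denylist that matches the literal `file://` prefix, and the second is an allowlist on the parsed URL scheme, which rejects anything it does not explicitly recognize.

```python
from urllib.parse import urlsplit


def naive_denylist_allows(url: str) -> bool:
    """Hypothetical narrow fix: reject only URLs that start with file://."""
    return not url.lower().startswith("file://")


def allowlist_allows(url: str, allowed: frozenset = frozenset({"https"})) -> bool:
    """Hypothetical broader fix: permit only explicitly listed schemes."""
    return urlsplit(url).scheme.lower() in allowed


if __name__ == "__main__":
    for candidate in (
        "file:///etc/passwd",
        "view-source:file:///etc/passwd",
        "https://example.com/",
    ):
        print(
            f"{candidate!r}: denylist allows={naive_denylist_allows(candidate)}, "
            f"allowlist allows={allowlist_allows(candidate)}"
        )
```

The denylist lets the `view-source:` variant through because the string no longer begins with `file://`, while the allowlist rejects it because `view-source` was never on the list. Enumerating what is permitted tends to fail closed; enumerating what is forbidden tends to fail open.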

From Academic to Operational: What This Means for Agentic AI Security

The PerplexedComet disclosure is important not just because of the attack itself, but because of what it represents: the moment a conceptual framework moved from research papers and conference talks into a documented incident, a responsible disclosure process, and a real vendor remediation decision. That is the bridge from academic to operational, and it has significant implications for how practitioners and security leaders should be thinking about agentic AI right now.

The core lesson is that soft guardrails and hard boundaries are not competing philosophies. They are complementary layers of a mature security architecture, but they are not equal in their role. Hard boundaries are prevention, whereas soft guardrails are detection. Conflating the two, or treating guardrails as a substitute for boundaries, is the architectural mistake that leaves organizations exposed. It also perpetuates the problem of keeping security reactive rather than preventative. Hard boundaries represent the implementation of Secure-by-Design in the agentic era.

Consider what the PerplexedComet case makes undeniable. Perplexity had existing guardrails and the agent had behavioral constraints, yet none of that stopped the attack, because a sufficiently crafted prompt injection can collapse the distinction between user intent and attacker intent inside an LLM's reasoning process. That is not a failure of implementation. It is a fundamental property of probabilistic systems operating on untrusted input. No amount of prompt-level instruction will reliably close that gap, because the same reasoning capability that makes an agent useful also makes it manipulable. This has been framed as the security-capability tradeoff in cybersecurity, a concept that existed long before agents entered the scene.

Hard boundaries work differently. They do not engage with the prompt at all. Instead, they constrain what the agent can do at the environment, code, network, and OS levels, before the model's reasoning ever becomes relevant. When Perplexity blocked file:// access in code, they did not make Comet smarter about recognizing attacks. They made the attack irrelevant. The LLM could be fully compromised by the specific injection scenario involved in the research and disclosure, and it would not matter, because the capability the attacker needed was architecturally removed.

That distinction between making the model behave better versus making certain behaviors architecturally impossible is the central insight practitioners need to carry forward as agentic AI deployment accelerates. It is not new; it is the same principle behind network segmentation, least-privilege access, and process isolation, all of which most security practitioners are very familiar with. We have applied it to every other class of software for decades. Agentic AI is not a special case that escapes these principles. It is a new context in which they apply with even more urgency, because the attack surface is larger, the capabilities are broader, and the consequences of a compromised agent are more severe.

The practical implication is a clear order of operations. Start by identifying what your agents can do and what they can reach. Then ask which of those capabilities need to be constrained at the architecture level rather than delegated to the model's judgment. File system access is an obvious example after PerplexedComet, but the same logic applies to network egress, credential access, code execution, and any other capability with a meaningful blast radius. Define those hard limits first, enforce them outside the model's control, and then layer soft guardrails on top for detection, behavioral baselining, and anomaly alerting. The challenge, of course, is that these constraints and risk tolerance are often situational and vary depending on the circumstances and intended business objectives.
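That order of operations can be sketched as a small policy gate that sits between the model and its tools. Everything here is an assumption made for illustration: the `ToolCall` type, the capability names, the two policy sets, and the `gate` function are hypothetical and do not come from any particular agent framework. The hard boundary is evaluated first and cannot be overridden; the soft guardrail (a confirmation callback) only runs for calls that survive it.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ToolCall:
    capability: str  # hypothetical capability name, e.g. "read_file"
    target: str      # what the capability would act on


# Hard boundary: capabilities removed outright, whatever the model asks for.
DENIED_CAPABILITIES = frozenset({"read_file", "exec_code"})

# Soft guardrail: capabilities that stay available but need user confirmation.
CONFIRM_CAPABILITIES = frozenset({"send_email"})


def gate(call: ToolCall, confirm: Callable[[ToolCall], bool]) -> str:
    """Decide whether a tool call proceeds: boundary first, guardrail second."""
    if call.capability in DENIED_CAPABILITIES:
        return "blocked"  # deterministic; confirm() is never consulted
    if call.capability in CONFIRM_CAPABILITIES and not confirm(call):
        return "declined"  # friction and visibility, not prevention
    return "allowed"


if __name__ == "__main__":
    always_yes = lambda call: True  # stands in for a user who clicks "OK"
    print(gate(ToolCall("read_file", "/etc/passwd"), always_yes))
    print(gate(ToolCall("send_email", "team@example.com"), always_yes))
```

Note that the denied capability stays blocked even when the confirmation callback always answers yes. A user who has been socially engineered, or a confirmation step the agent talks its way past, cannot reopen a capability that the gate removed.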

The soft guardrail layer still matters, and it is worth being precise about why. Soft guardrails catch the things hard boundaries cannot cover: the subtle behavioral drift, the unusual sequence of actions, the ambiguous request that sits just inside permitted scope. They provide the observability that makes agentic AI auditable and improvable over time. They create friction that raises the cost of attack even when they cannot always prevent it outright. A confirmation prompt before sensitive actions, like the one Perplexity added alongside their hard boundary fix, may not stop a sophisticated attacker, but it catches mistakes, misuse, and lower-sophistication threats. Defense-in-depth has always meant layering controls with different failure modes, and that principle applies here as much as anywhere.

But the ordering matters. Security programs that start with guardrails and treat hard boundaries as a future optimization are building their architecture backwards. The guardrail layer is only as valuable as the boundary layer beneath it. Detection without prevention means you are measuring how badly you are losing, not stopping the loss.

What PerplexedComet ultimately demonstrated is that agentic browsers, coding agents, enterprise copilots, and any other system that combines autonomous action with access to sensitive resources cannot be secured by asking the model to be careful. They require the same disciplined application of architectural constraints that we have always applied to privileged software: minimize the attack surface, enforce least privilege, assume compromise, and build the controls that hold even when everything else fails.

The research is clear, the incident record is growing, and the remediation playbook, demonstrated in practice by Perplexity's response to Zenity's disclosure, is available. The question for security leaders is no longer whether hard boundaries are necessary. It is whether they will be in place before the next calendar invite arrives.
